You see me, but you don't get me.

Social networks are becoming ever harder to analyze and interpret, even with AI-enhanced tools for listening to online conversations.

Tuesday is Alive In Social Media day :) Welcome to the new subscribers. This week: a dense, technical topic that will nonetheless be at the heart of upcoming major elections and public debates, namely understanding online conversations.

Dominique Lahaix, CEO of eCairn, has published an important post entitled "Social Listening's Endgame: Navigating a Future Beyond Obsolescence". In other words, the way we used to listen to online conversations is becoming obsolete, and we need to rethink how we try to understand people.

From observation to question-and-answer with artificial intelligence

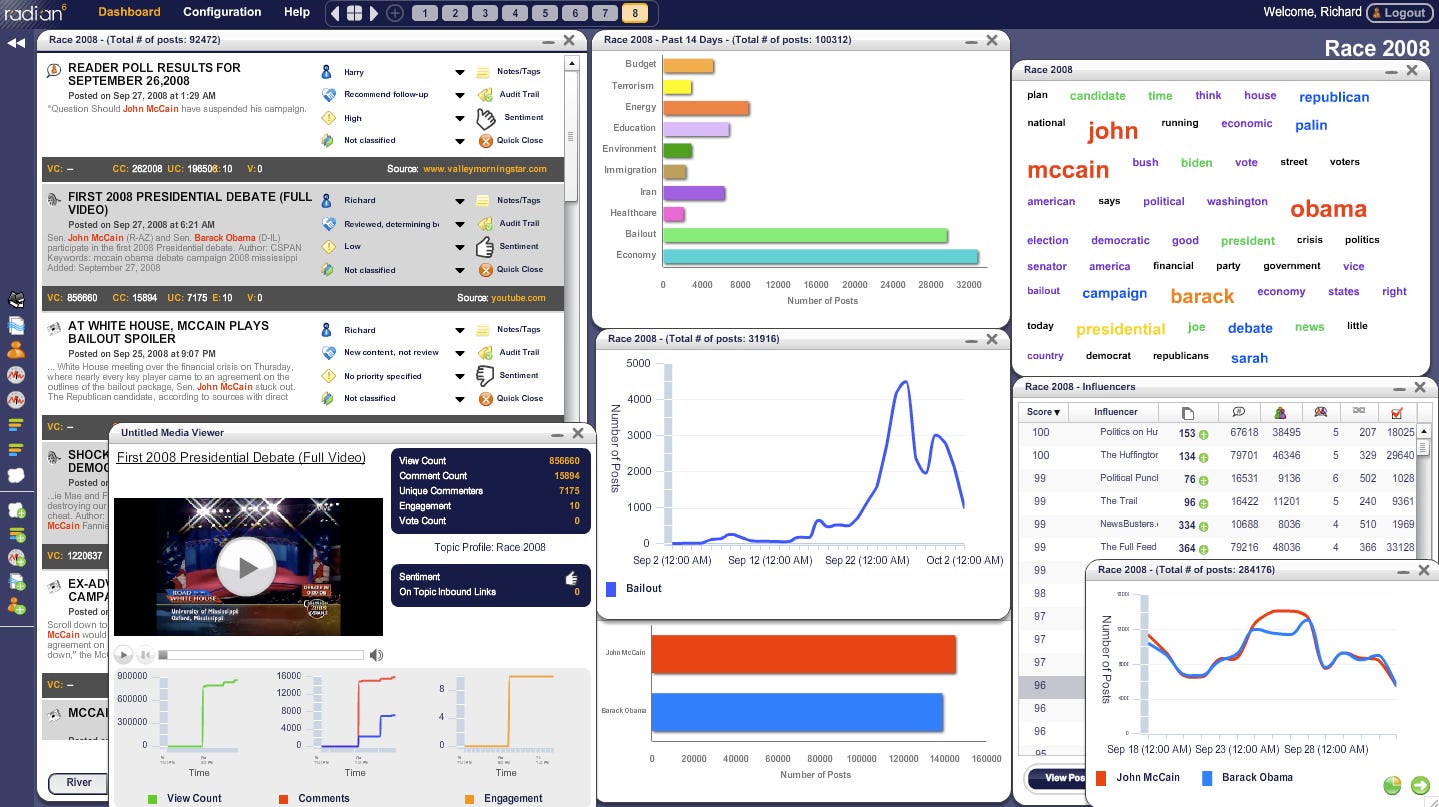

The first platforms for listening to online conversations date back to 2005-2006. Tools such as Radian6 emerged, letting analysts build dashboards from semantic queries. Put simply: if I wanted to know whether people preferred a pink Louis Vuitton dress or a blue one, I would enter "Louis Vuitton + dress + pink" and "Louis Vuitton + dress + blue" into Radian6. The tool could then model the associated keyword clouds, plot how these topics evolved over time, and support quite detailed comparisons.
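To make the mechanics concrete, here is a minimal Python sketch of that kind of query. It assumes each "+" means that every term must appear in a post (a simple AND query) and that matching is plain case-insensitive substring search. The sample posts and the `matches` and `count_matches` helpers are hypothetical illustrations, not Radian6's actual query language or API.

```python
# Hypothetical sample of posts; a real listening tool would collect these from social platforms.
posts = [
    "Loved the pink Louis Vuitton dress at the show",
    "That blue Louis Vuitton dress is stunning",
    "Pink is the color: the Louis Vuitton dress works in pink",
    "Louis Vuitton bags are overpriced",
]


def matches(post: str, query: str) -> bool:
    """True if every '+'-separated term of the query appears in the post (case-insensitive AND)."""
    text = post.lower()
    terms = [term.strip().lower() for term in query.split("+")]
    return all(term in text for term in terms)


def count_matches(posts: list[str], query: str) -> int:
    """Number of posts matching the query: the raw volume a dashboard would chart."""
    return sum(matches(post, query) for post in posts)


for query in ["Louis Vuitton + dress + pink", "Louis Vuitton + dress + blue"]:
    print(query, "->", count_matches(posts, query))
```

With these made-up posts, the pink query matches two posts and the blue query one. Plain substring matching is a deliberate simplification; it only shows how a query turns conversations into comparable volumes.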

In the early 2000s, online conversations were less abundant, and platforms were, paradoxically, more open: analysts could extract significant, "real" volumes of information and signals on which to base their interpretation. eCairn was even more interesting: we could view communities dynamically (especially blogs, through their outgoing and incoming links) on maps where centers of gravity emerged visually (we still can, but on very few platforms).

This work never quite had the impact it deserved on decision-makers, except perhaps on those who saw an interest in manipulating opinion (the Cambridge Analytica scandal remains a marker of that era). It did, however, foster debate about the data and about the relevance of the conclusions drawn from it. Because it was a discipline in which a human fundamentally had to ask the questions, the resulting debates were often richer than the tables and graphs the tools produced.

In my opinion, the impact of artificial intelligence on listening to online conversations is dangerous. As Dominique Lahaix explains in his post, users rarely question the routes Google Maps suggests, and only a handful of engineers dig into the underlying technology and data points. Tomorrow, these applications will rely on AI even more.

Applied to listening to online conversations, this habit of not challenging the tool's conclusions and treating them as solid guidance could be worrying.

Future systems such as ChatGPT or Mistral will gradually split the work of analyzing online conversations into three roles:

People in charge of data quality (to ensure that AI absorbs the right channels)

People in charge of tool setup and correction

Analysts themselves, who, instead of configuring the tool, will become more or less seasoned users of it. We already see this: users are taught to improve their "prompts", not necessarily to correct the tool.

This poses a significant problem: analysts will end up spending their time asking an AI questions to build their strategy, rather than breaking new ground themselves. The answers may tend to favor ready-made thinking over genuinely questioning a subject or behavior.

Is the human, that formidable mess, the best safeguard against AI?

In 2019, the Panoptykon Foundation published a summary of the three layers of our digital identity (its chart is fascinating and worth viewing enlarged):

What we share (the only layer we can partly control): our statuses and content on social networks, blocked contacts, our languages...

Our behaviors in digital environments (browsing a website, distance between different connected devices...)

What machines think of us (our relationship status, our physical condition, etc.)

It is on this last layer that AI risks making significant errors. TikTok has fiercely mastered the principles of concatenation, which groups users in real time into "sides" and feeds them content. But there is a difference between being able to engage audiences (advertisers' famous hook) and... truly understanding them.

"Instead of categorizing content in a traditional way (for example: cooking, fashion, cats, etc.), TikTok invents its own categories, its own 'sides'. In other words, TikTok continuously analyzes emerging sequences of actions, then suggests them to users who resemble those who initiated these sequences. TikTok accepts a margin of error in this system and corrects, in real time and with increasing accuracy, the criteria and the audiences to which it serves this content. Because human creativity is limitless, users end up working for the network by generating these logical sequences; content creators who spot an opportunity then create content for these new sequences. These sequences often hit the mark, tapping into either people's deepest feelings or their pre-existing cognitive biases."
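The grouping step described in this quote can be illustrated with a toy model. This is a hypothetical Python sketch, not TikTok's actual system: it assumes an "emerging sequence" is simply a pair of consecutive actions shared by at least two users, that a "side" is the set of users who performed it, and that a user "resembles" the initiators if they performed the first action of the sequence. The real-time error correction mentioned in the quote is not modeled. All user names, actions, and function names are invented for illustration.

```python
from collections import Counter

# Hypothetical action logs: each user's actions, in the order they were performed.
logs = {
    "ana": ["watch:cats", "like:cats", "watch:cooking"],
    "ben": ["watch:cats", "like:cats", "share:cats"],
    "chloe": ["watch:cats", "watch:fashion"],
    "dan": ["watch:fashion", "like:fashion"],
}


def pairs_of(actions):
    """Set of consecutive (action, next_action) pairs in one user's log."""
    return set(zip(actions, actions[1:]))


def emerging_sequences(logs, min_users=2):
    """Consecutive action pairs performed by at least `min_users` distinct users."""
    counts = Counter()
    for actions in logs.values():
        counts.update(pairs_of(actions))  # a set, so each user counts once per pair
    return {pair for pair, n in counts.items() if n >= min_users}


def group_into_sides(logs, sequences):
    """Map each emerging sequence to the users who performed it (a 'side')."""
    sides = {}
    for user, actions in logs.items():
        for seq in sequences & pairs_of(actions):
            sides.setdefault(seq, set()).add(user)
    return sides


def suggest(logs, sequences):
    """Suggest the next step of a sequence to users who did its first action but not the pair."""
    suggestions = {}
    for user, actions in logs.items():
        done = pairs_of(actions)
        for first, second in sequences:
            if first in actions and (first, second) not in done:
                suggestions.setdefault(user, set()).add(second)
    return suggestions


seqs = emerging_sequences(logs)
print(seqs)
print(group_into_sides(logs, seqs))
print(suggest(logs, seqs))
```

With these invented logs, only the pair `("watch:cats", "like:cats")` emerges; ana and ben form its side, and chloe, who watched cats without liking, is suggested "like:cats".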

A major lesson from 20 years of listening to online conversations is that people do not talk about every subject, nor do they always search for them. Even trends that get wide media coverage often reach only a few thousand search queries worldwide at most. An artificial intelligence could therefore dismiss a deep desire as marginal. Humans build their thinking through real conversation; admittedly, that conversation increasingly takes place in instant messaging, where groups invent their own secret gardens, with their own languages, symbols, and emotions. But no AI (nor any social listening tool) can fully enter there without authorization.

Humans often need help to carry an idea or thought further, especially on intimate or new subjects. This is probably where safeguards still need to be built: as long as AI remains stuck working with (fortunately) incomplete data from the past, it can keep seeing and hearing us without fully understanding us. If, on the other hand, we let it build on our latent needs and express them through it, we will hand explosive material to less scrupulous governments or organizations, and give away our digital liveness.

You see me, but you don't understand me.

Concept of the week: speed-watching

Speed-watching is the habit of routinely watching video content (movies, series...) at accelerated speed.

According to YouTube, users increasingly watch videos at accelerated speed (1.5x normal speed). This supports what we argue on this Substack: since time spent online has hit a ceiling, the only remaining lever is to intensify usage.
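The arithmetic behind this argument is simple: when viewing time is capped, the amount of content consumed grows linearly with playback speed. A minimal Python sketch (the function names are ours, purely illustrative):

```python
def content_minutes(viewing_minutes: float, speed: float) -> float:
    """Minutes of content covered in a fixed amount of viewing time at a given playback speed."""
    return viewing_minutes * speed


def viewing_minutes_needed(content_length: float, speed: float) -> float:
    """Viewing time needed to get through a given amount of content at a given playback speed."""
    return content_length / speed


print(content_minutes(60, 1.5))         # 90.0
print(viewing_minutes_needed(60, 1.5))  # 40.0
```

At 1.5x, an hour of viewing covers 90 minutes of content, and a 60-minute video takes 40 minutes: 50% more content in the same time.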

Impressive links

The "Study With Me" trend is exploding, notably on YouTube. Analysis on YouTube's blog

In the New York Times: a study explains, for the first time, the deathbed visions some people experience shortly before dying.

Have a great week! Feel free to share this newsletter, like it, comment, or keep sending me emails: these notifications are a joy.